Elon Musk and the Bible: Anselm Project v1.8

Anselm Project rebuilt its biblical translation engine (v1.8) with Grok 4.1: a four-step pipeline for literal, consistent, and standardized translations.

I rebuilt the entire translation engine for the Anselm Project Bible. Again. Version 1.8 is a complete rewrite that fixes the problems I talked about in my last few posts, and it does it using a completely different AI model with a much more sophisticated pipeline.

Let me explain what changed and why.

The Problem with Version 1.0

When I released version 1.0, the AI was supposed to translate directly from the Greek and Hebrew source texts I provided. But as the folks on r/AcademicBiblical kindly pointed out, the AI was pulling from tradition instead of strictly translating.

The classic example was Mark 1:1. I fed the system Greek text that reads "Ἀρχὴ τοῦ εὐαγγελίου Ἰησοῦ χριστοῦ" without "υἱοῦ θεοῦ" (the Son of God), but the translation still came back with "the Son of God" in it. The AI recognized the biblical pattern and filled in what it thought should be there, not what was actually there.

Another example was 1 Corinthians 7:36, where the Greek word "ὑπέρακμος" strictly means "beyond the peak" or "past the prime," but traditional translations render it as "past the flower of youth" or "beyond the bloom of age." The AI kept using these interpretive phrases even though they weren't in the Greek. It was cheating to get answers from tradition instead of translating.

Version 1.5 was much better with better documentation of decisions (and much cheaper to produce). However, I still noticed differences in the proper names, which necessitated 1.6.

No matter how forcefully I wrote the prompts, OpenAI's models kept doing this. I needed a different approach. I decided to try throwing the question to a different beast and got interesting results.

Elon Musk and the Bible

I decided to try xAI's Grok 4.1 model. I had been experimenting with it for other parts of the Anselm Project, and I noticed it followed instructions with less creative interpretation than OpenAI's models. It seemed more literal-minded, which is exactly what I needed for biblical translation.

The switch required rewriting the entire codebase. Version 1.5 used OpenAI's API with async HTTP requests through aiohttp. Version 1.8 uses xAI's SDK with their Client library. The architecture is completely different under the hood (not to mention much cheaper for the full-power model).

But more importantly, I redesigned the entire translation pipeline to be more robust.

The Four-Step Translation Pipeline

Version 1.5 had a simple two-step process (thanks to the previous iteration of morphologies and lemma from 1.0): generate multiple translation candidates, then have a judge AI pick the best one. Version 1.8 adds two critical new steps that make the translation both more literal and more readable.

Step One: Generate Translation Candidates

The first step works similar to version 1.5. I send the source text (Greek or Hebrew) to Grok 4.1 with strict instructions to translate only what is lexically present. The prompt explicitly forbids introducing nouns, verbs, clauses, titles, or concepts that aren't in the source text. It also forbids consulting other English translations or importing alternate readings, but also makes zero mention of biblical texts or even what language is going to be handed over.

The system generates multiple translation candidates for each pericope. Each verse gets its own translation plus a rationale explaining the translation decisions made.

Grok 4.1 is much more literal, judging from the literal outputs.

Step Two: Judge Selects Best Translation

A separate judge AI reviews all the candidates and selects the single best translation based on these criteria: literally accessible, ideologically faithful to the source, dignified English, and internally consistent.

The judge also does something new that feeds into step three: it extracts all proper names from the selected translation, including people, places, and divine names. This list becomes crucial for the next phase.

Step Three: Standardize Proper Names

This is entirely new, but a fairly obvious addition. I created a specialized name standardization AI that takes the judge's output and corrects proper name spellings to match modern English Bible translation conventions.

Divine names are handled correctly. YHWH, Yahweh, or Jehovah all get standardized to "the LORD" (with small caps LORD). Elohim becomes "God." Adonai becomes "Lord." Compound names like "YHWH God" become "the LORD God." This follows the standard convention used by major English translations like the ESV, NIV, NASB, and KJV.

Everything gets normalized to the standard modern English Bible spelling during the actual translation process, rather than through post-processing.

The AI provides rationale for each correction, which I found helpful for debugging when names got overcorrected.

Step Four: Polish English Syntax

The final step was the breakthrough that lets me have both literal translation and readable English. The polisher AI receives the literal translation with standardized names and its only job is to rearrange words for natural English syntax.

For example, in Genesis 1:1, Grok 4.1 would, multiple times, translate the famous passage as "In beginning created God..." We understand, but the English was very odd, of course.

The constraints are critical here. The polisher can only rearrange existing words. It cannot change word choices, substitute synonyms, add words, or remove words except for minimal articles and prepositions required by natural English grammar. It must preserve all emphasis and structural patterns from the literal translation.

I can foresee some errors, but one step at a time.

The focus is entirely on subject-verb-object ordering and natural English clause structure. Hebrew and Greek word order often doesn't work in English, so this step makes the translation readable without sacrificing the literal word choices from step one.

For each verse, the polisher explains what syntactic changes it made and why they improve English readability. This creates a transparent audit trail of exactly what got rearranged and why.

Structured Outputs with Pydantic

Version 1.8 uses Pydantic models to enforce structured JSON responses from the AI at every step. And it kicks errors back WAY more often than I would have expected. I had to include retry logic early in the process.

The translation step returns a PericopeTranslationResponse with a list of VerseTranslation objects. The judge returns a PericopeJudgeResponse with the selected verses and extracted proper names. The standardizer returns a NameStandardization with a list of NameCorrection objects. The polisher returns a PericopePolishingResponse with polished verses.

This structure (in theory) eliminates parsing errors and makes the data flow clean and predictable. At least, it should, unless I'm using it wrong. Each step knows exactly what format it's receiving and what format it needs to output.

Massive Parallelization

The other huge improvement in version 1.8 is parallelization. Version 1.5 processed pericopes sequentially, one at a time. Version 1.8 can process up to 80 pericopes simultaneously across multiple books, with a maximum of 240 concurrent API calls. I could probably boost this further, too.

This is controlled by two semaphores. The API semaphore limits concurrent API calls to prevent overwhelming xAI's servers. The pericope semaphore limits how many pericopes can be in various stages of the four-step pipeline at once.

The practical result is that I can now translate Genesis and Exodus at the same time, with multiple pericopes from each book being processed in parallel. The whole Bible can be retranslated in hours instead of days.

Better Error Handling

Version 1.8 includes a retry mechanism for failed pericopes. If a pericope fails for any reason (API timeout, malformed response, network issue), the system saves an error file in the pericope's subdirectory. Later, I could run the script with the --retry-failures flag and it will automatically find all error files and retry just those pericopes.

The system also includes graceful shutdown handling. If you press Ctrl+C during a translation run, it finishes the current pericope cleanly, saves all completed work, and exits. No partial files, no corrupted JSON, no lost work.

The Results

The combination of Grok 4.1's more literal-minded approach and the four-step pipeline has produced noticeably more interesting results. Are they better? In some respects. But I get the impression that they are more honest. The AI still occasionally tries to interpret instead of translate, but the errors are far less frequent and the standardization and polishing steps clean up most of what remains.

Mark 1:1 now translates correctly based on the Greek text I actually provide. The divine name standardization ensures consistent rendering of YHWH as "the LORD" throughout. The syntax polishing makes the English natural without sacrificing literal word choices.

That said, I still can't crack the 1 Corinthians 7:36 egg. Even with all the constraints in the prompts and the four-step pipeline, the AI keeps wanting to render "ὑπέρακμος" with interpretive phrases like "past the bloom" or "overripe" instead of sticking to something more neutral like "beyond her proper time." Unless I specifically tell the AI to act as a hyper-literal translator with no regard for readability, it defaults to traditional interpretive language for this word. It's frustrating, but it shows the limits of what prompting can accomplish when an AI has been trained on traditional translations.

I'm calling this version 1.8 because I know there will be more iterations. The translation cheating problem is minimized but not completely eliminated. I'll keep refining the prompts and the pipeline. But this is a significant improvement over version 1.5, and the foundation is much more solid for future enhancements.

If you want to see the results, check out the Anselm Project Bible reader... soon. It might take me another day or so to get it up. It might be up by the time you read this. Every verse includes access to the morphology, the critical text, parallel passages, and the alternate translation candidates the AI generated. It will give you the raw literal, the rationale, and the rationale of the polishing process, too.

The transparency is there if you want to dig into how it works.

God bless, everyone.

Related Articles

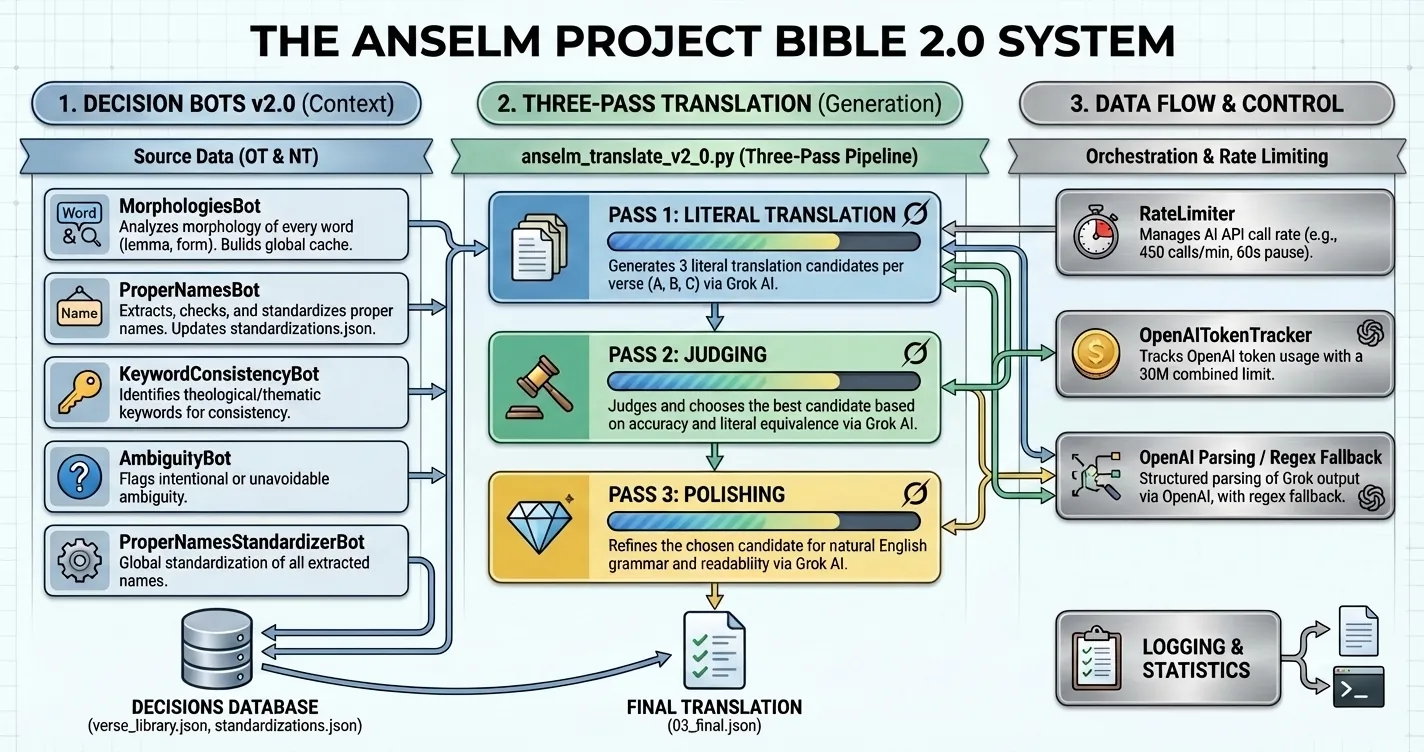

Anselm Project Bible v2.0 Released

Version 2.0 of the Anselm Project Bible introduces a blind-translator pipeline, Grok-powered generat...

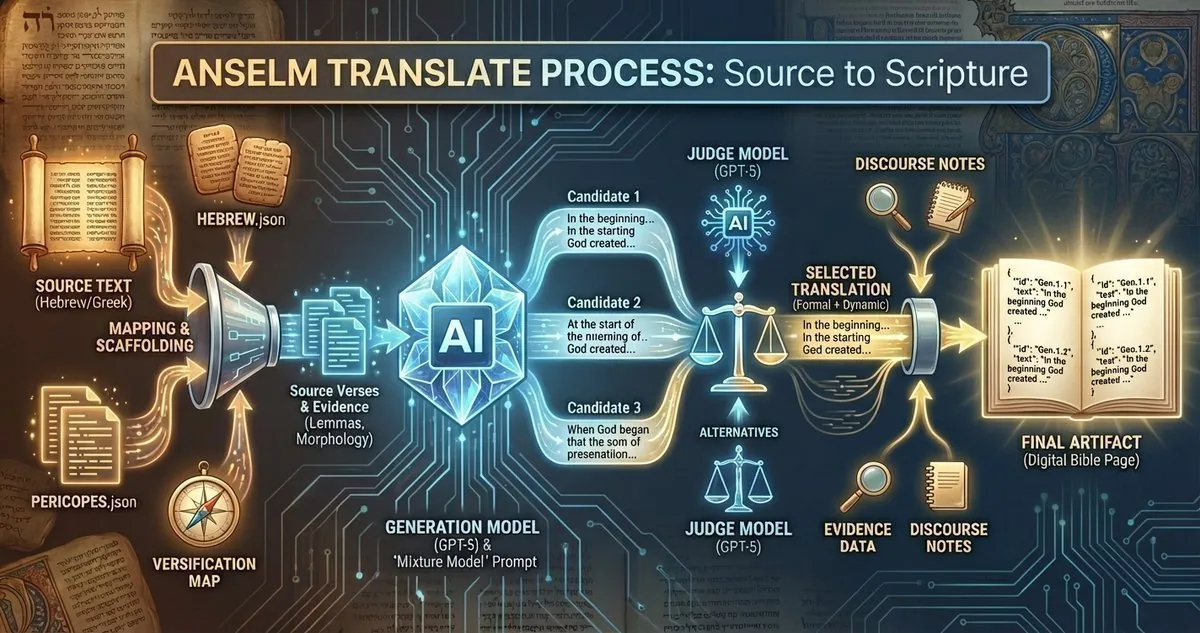

How the Anselm Project Bible Was Made

How the Anselm Project Bible was made: inside the translation pipeline, the mixture model approach, ...

Isaiah 26:3-4 Explained: Hebrew 'Peace, Peace' (Shalom Shalom) and the Everlasting Rock

Explore Isaiah 26:3-4: a Hebrew exegesis of "shalom shalom" and the everlasting Rock, showing how tr...